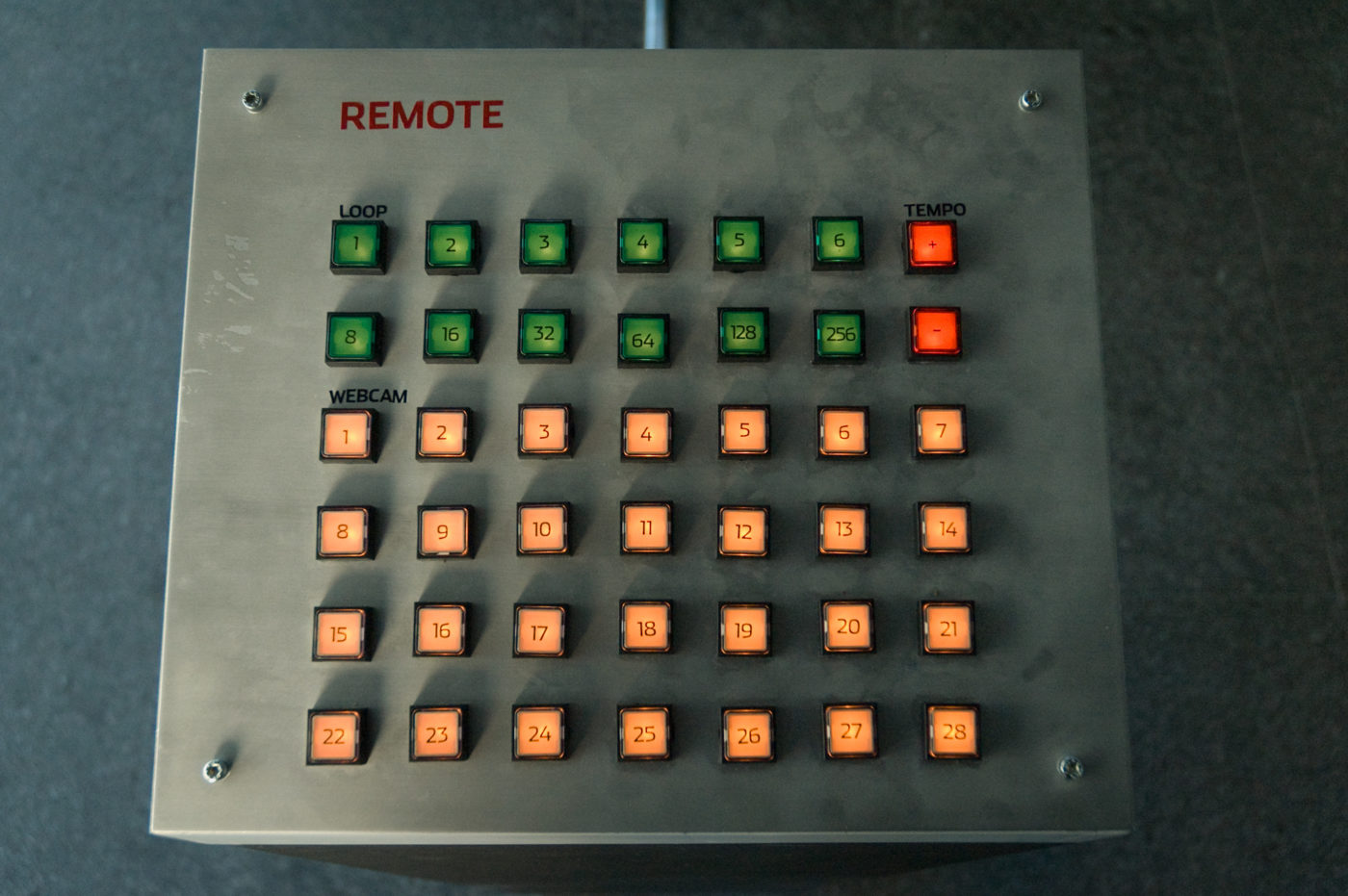

Remote


The system continuously downloads and stores images from 30 different webcam feeds and uses the resulting image sequences to generate music loops. Each webcam frame is analyzed as it is played back, producing different sounds depending on how it has changed compared to the previous image. By choosing webcam “channels” and changing their loop length and speed, the viewer can compose short loop-based pieces, making music with webcams from all around the world. Remote has been shown at the Triad New Media Gallery, in the exhibition “Fabrica, I’ve been waiting for you”.
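The frame-to-sound idea can be illustrated with a minimal sketch. This is not the code used in Remote: the grayscale frame format, the function names, and the mapping from amount of change to a MIDI-style note number are all assumptions made for illustration.

```python
from typing import List, Sequence

# Assumed frame format: rows of grayscale pixel values in the range 0-255.
Frame = Sequence[Sequence[int]]


def frame_change(prev: Frame, curr: Frame) -> float:
    """Mean absolute pixel difference between two frames, scaled to 0.0-1.0."""
    total = 0
    count = 0
    for prev_row, curr_row in zip(prev, curr):
        for p, c in zip(prev_row, curr_row):
            total += abs(c - p)
            count += 1
    return total / (count * 255) if count else 0.0


def change_to_note(change: float, low: int = 36, high: int = 84) -> int:
    """Map a 0.0-1.0 change value to a note number (hypothetical mapping)."""
    change = min(max(change, 0.0), 1.0)  # clamp to the expected range
    return low + round(change * (high - low))


def loop_notes(frames: List[Frame]) -> List[int]:
    """One note per frame after the first, driven by change from the previous frame."""
    return [change_to_note(frame_change(a, b)) for a, b in zip(frames, frames[1:])]
```

In this sketch a static scene stays on the lowest note, while a frame that differs strongly from its predecessor produces a higher one. Loop length would correspond to how many stored frames are passed to `loop_notes`, and speed to how quickly the resulting notes are stepped through.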

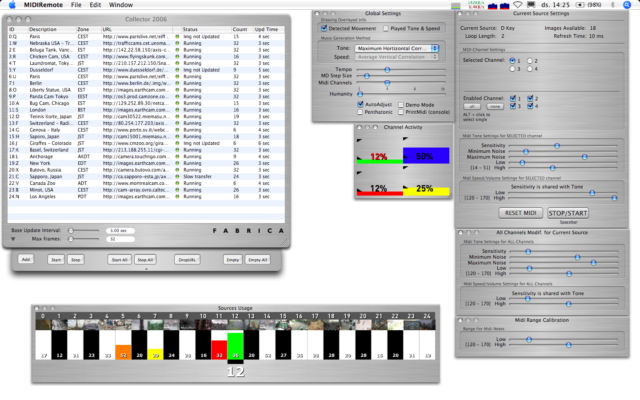

Short explanatory video of Remote, edited by Federico Urdaneta.

Remote was made together with Andy Cameron (Ideas), Federico Urdaneta (Sound Design), Martyn Ware (Sound Design) and Daniel Hirschmann (Physical Interface). I did most of the programming, building on some older code by Dave Towey.

Remote's interface has since evolved, and it now works as a live-performance machine. It can be played directly from an M-Audio O2 MIDI controller, which turns it into a complete musical instrument. Remote was performed at the Chaise Lounge (see flyer).

Video showing the MIDI interface. Video walkthrough on how to use the MIDI interface.